Porting the NPU TensorFlow Routine on the i.MX 8M Plus SBC

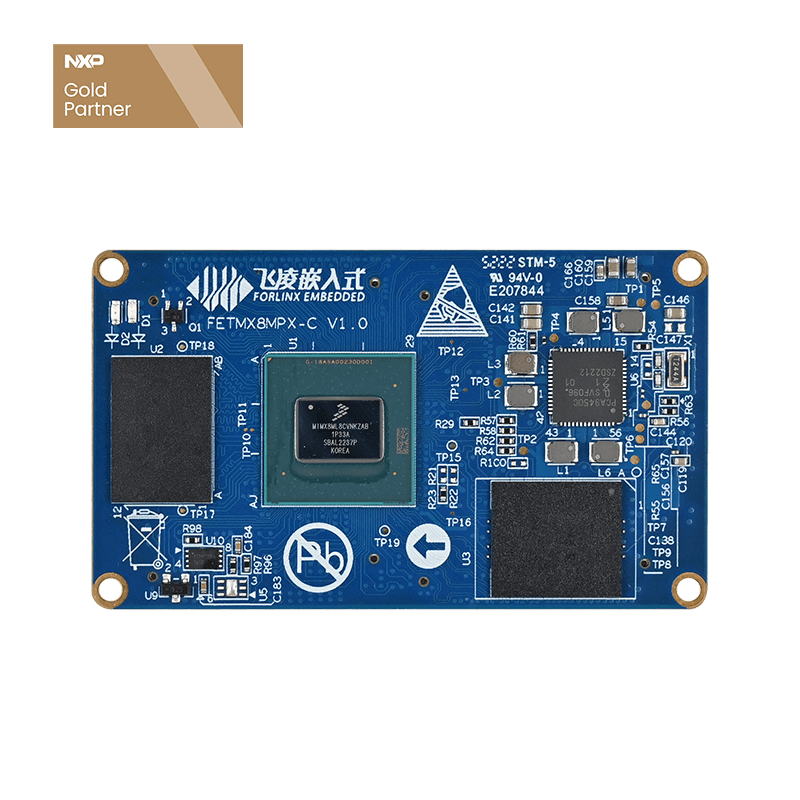

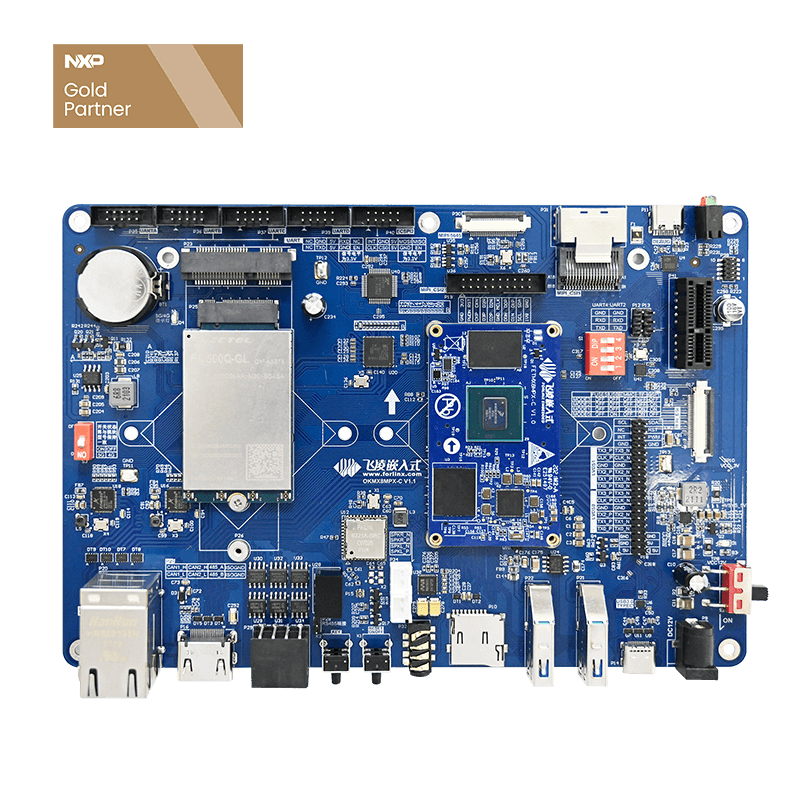

The Forlinx Embedded OKMX8MP-C single-board computer is built around the NXP i.MX 8M Plus processor. This processor family focuses on machine learning and vision, advanced multimedia, and highly reliable industrial automation. It targets applications such as smart cities, the industrial Internet, smart medical care, and smart transportation. The processor combines a quad-core or dual-core Arm® Cortex®-A53 clocked at up to 1.6GHz with a Neural Processing Unit (NPU) rated at up to 2.3 TOPS.

The board used in this article is the Forlinx Embedded OKMX8MP-C SBC, running Linux 5.4.70 with Qt 5.15.0. The article describes how to port the official NPU TensorFlow routine.

1. The Image Recognition Routine of NPU

The product manual for the OKMX8MP-C single-board computer includes a chapter on the NPU image recognition routine, which is located in /usr/bin/tensorflow-lite-2.3.1/examples on the eMMC partition. I mounted the eMMC partition under /media and found the routine at the corresponding location there.

Boot from eMMC, enter the /usr/bin/tensorflow-lite-2.3.1/examples/ directory, and run the test example.

Then switch back to the TF card system and run the example again. This time it reports an error: the NNAPI path of the label_image program needs the following dynamic link libraries:

- libm-2.30.so
- libneuralnetworks.so.1.1.9
- libnnrt.so.1.1.9
- libArchModelSw.so
- libGAL.so
- libNNArchPerf.so
- libOpenVX.so.1.3.0
- libovxlib.so.1.1.0
- libVSC.so

Among them, libm-2.30.so, which is linked as ld-linux-aarch64.so.1, is located in the /usr/lib/aarch64-linux-gnu/ directory. If this library is not under /usr/lib/aarch64-linux-gnu/ on the ported target system, an error is reported at runtime. Copy all of the dynamic link libraries above to the correct locations (/usr/lib and /usr/lib/aarch64-linux-gnu/), then run the example again.

The example now runs without errors, so the runtime environment has been ported successfully and the TensorFlow routine can be verified next.
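Before running label_image on the target system, it can help to check that every required library is actually present. The following is a minimal sketch, not part of the official routine: the library names and the two directories come from the text above, while the helper name `find_missing_libs` is hypothetical and introduced only for illustration.

```python
from pathlib import Path

# Library names as listed in the article.
REQUIRED_LIBS = [
    "libm-2.30.so",
    "libneuralnetworks.so.1.1.9",
    "libnnrt.so.1.1.9",
    "libArchModelSw.so",
    "libGAL.so",
    "libNNArchPerf.so",
    "libOpenVX.so.1.3.0",
    "libovxlib.so.1.1.0",
    "libVSC.so",
]

# The two target locations named in the article.
SEARCH_DIRS = ["/usr/lib", "/usr/lib/aarch64-linux-gnu"]


def find_missing_libs(names, search_dirs):
    """Return the names that are not found in any of the search directories.

    Path.exists() follows symbolic links, so a dangling link counts as missing.
    """
    missing = []
    for name in names:
        if not any((Path(d) / name).exists() for d in search_dirs):
            missing.append(name)
    return missing


if __name__ == "__main__":
    for name in find_missing_libs(REQUIRED_LIBS, SEARCH_DIRS):
        print(f"missing: {name}")
```

If the script prints nothing, all of the listed files exist in at least one of the two directories. It does not check which directory a given library belongs in, so the placement described above still has to be verified by hand.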

2. TensorFlow Routine Verification

First, the official Forlinx Embedded demo is used for verification. The results are as follows.

    0.160784: 639 maillot
    0.137255: 436 bathtub
    0.117647: 886 velvet
    0.0705882: 586 hair spray
    0.0509804: 440 bearskin

    0.972549: 644 mask
    0.00392157: 918 comic book
    0.00392157: 904 wig
    0.00392157: 797 ski mask
    0.00392157: 732 plunger

    0.380392: 583 grocery store
    0.321569: 957 custard apple
    0.0862745: 955 banana
    0.0352941: 956 jackfruit
    0.027451: 954 pineapple

    0.52549: 922 book jacket
    0.0705882: 788 shield
    0.0705882: 452 bolo tie
    0.0588235: 627 lighter
    0.0352941: 701 paper towel

    0.121569: 656 miniskirt
    0.054902: 835 suit
    0.0470588: 852 television
    0.0470588: 440 bearskin
    0.0392157: 679 neck brace

    0.65098: 918 comic book
    0.172549: 747 puck
    0.0196078: 922 book jacket
    0.0196078: 723 ping-pong ball
    0.0117647: 806 soccer ball

    0.678431: 918 comic book
    0.0784314: 418 balloon
    0.0470588: 880 umbrella
    0.0470588: 722 pillow
    0.0156863: 644 mask

    0.184314: 585 hair slide
    0.156863: 794 shower cap
    0.0941176: 797 ski mask
    0.0431373: 644 mask
    0.0352941: 571 gasmask

The recognition results for the ten pictures are each given as a confidence score followed by a class index and label. Judging from the recognition probabilities, the NPU on this Forlinx i.MX 8M Plus development board delivers strong computing performance.
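To compare results across pictures, the output can be parsed into structured records. The sketch below assumes one result per line in the form `score: index label`, which is how label_image prints them. The helper names `parse_results` and `top1` are hypothetical, and the sample is the second result group from the demo output.

```python
import re

# One result per line: a score, a colon, a class index, then the label text.
RESULT_RE = re.compile(r"^\s*(\d*\.?\d+(?:[eE][-+]?\d+)?):\s+(\d+)\s+(.+?)\s*$")


def parse_results(text):
    """Parse label_image style lines into (score, class_index, label) tuples."""
    results = []
    for line in text.splitlines():
        match = RESULT_RE.match(line)
        if match:
            score, index, label = match.groups()
            results.append((float(score), int(index), label))
    return results


def top1(results):
    """Return the entry with the highest score, or None if there are none."""
    return max(results, key=lambda r: r[0], default=None)


sample = """\
0.972549: 644 mask
0.00392157: 918 comic book
0.00392157: 904 wig
0.00392157: 797 ski mask
0.00392157: 732 plunger
"""

parsed = parse_results(sample)
print(top1(parsed))  # (0.972549, 644, 'mask')

# Scaling the scores by 255 gives near-integer values.
print([round(score * 255) for score, _, _ in parsed])  # [248, 1, 1, 1, 1]
```

The scores in the demo output are all close to multiples of 1/255. That is consistent with an 8-bit quantized model, although the article does not state which model file was used.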

According to NXP, the i.MX 8M Plus processor has a built-in NPU that delivers 2.3 TOPS (Tera Operations Per Second; 1 TOPS means the processor can perform one trillion operations per second), enabling advanced AI algorithm processing. NXP also gives some specific use cases for the NPU of the i.MX 8M Plus processor, such as processing more than 40,000 English words and running MobileNet v1 image classification at more than 500 images per second.
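The quoted figures can be turned into per-image numbers with simple arithmetic. The sketch below uses only the two values stated above (2.3 TOPS and 500 images per second). The result is an upper bound at the peak rate, since the article does not say how much of the NPU the MobileNet v1 workload actually uses.

```python
PEAK_TOPS = 2.3          # NPU peak rate quoted in the text
IMAGES_PER_SECOND = 500  # MobileNet v1 throughput quoted in the text

# 1 TOPS is one trillion (1e12) operations per second.
peak_ops_per_second = PEAK_TOPS * 1e12

# Average time per image at the quoted throughput.
time_per_image_ms = 1000.0 / IMAGES_PER_SECOND

# Operations the NPU could perform per image if it ran at its peak rate.
peak_ops_per_image = peak_ops_per_second / IMAGES_PER_SECOND

print(f"{peak_ops_per_second:.2e} operations per second at peak")
print(f"{time_per_image_ms:.1f} ms per image at {IMAGES_PER_SECOND} images/s")
print(f"{peak_ops_per_image:.2e} operations available per image at peak")
```

At 500 images per second, each image takes 2 ms on average, during which the NPU could perform at most about 4.6 billion operations.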